Corrupting Your TS Streams


This post sheds some light on possible reasons for deliberate corruption of transport streams and demonstrates how useful it may be when performing testing and troubleshooting tasks.

Causing some deliberate problems

During TechCon, I talked about how we are opening up some tools to the world and how some of these tools can be used for troubleshooting issues.

The demo scenario I talked about used the two initial Apache-licensed tools we’ve added to our GitHub pages.

In the presentation, I used a small Cinegy tool called NetSend to push a saved MPEG-2 Transport Stream (TS) file onto my network. However, you can use something like Multicast (on Linux), or VLC on pretty much anything, to generate a sensible stream. You could also use any of our software that outputs IP, or an actual device such as an IP-enabled IRD or a consumer box like an HDHomeRun.

Anyway, let’s assume you have a source of MPEG-2 TS data streaming around, and you want to muck around and corrupt it.

But Lewis, why would I corrupt my transport stream???

Testing requires that you check situations that are not ideal, as well as situations that work correctly. So, if you are trying to test something that implements error correction, or you want to check that something reading a TS can stand up to abuse, having a way to cause some problems that doesn’t involve waving things in front of your satellite dish LNB (don’t laugh, I’ve done that) can be really useful.

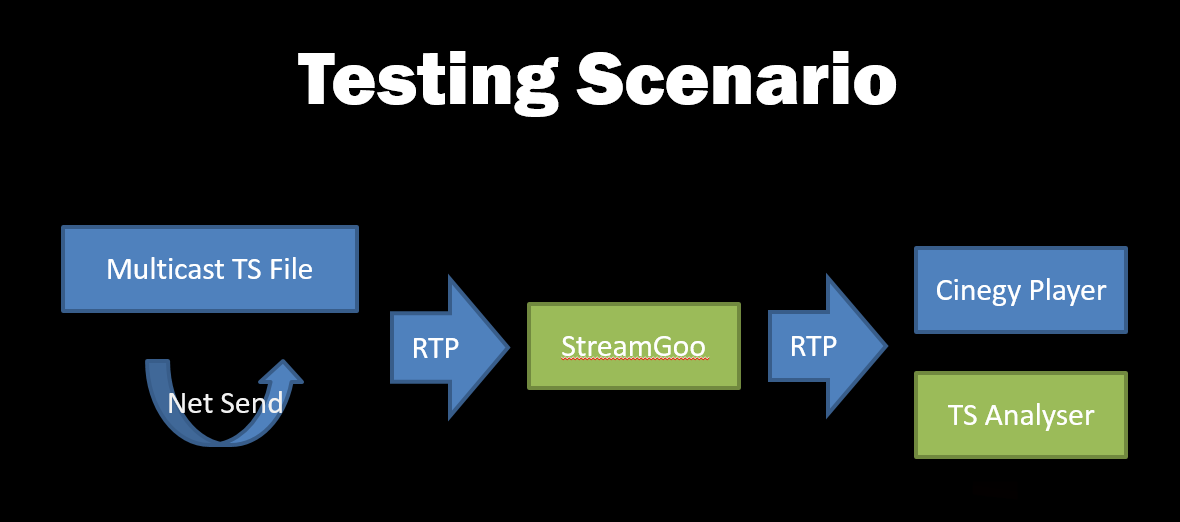

So, let’s say we want to verify that our validator tool sees errors, and that Cinegy Player (free trial here) can still read the corrupted stream. Here’s the setup scenario:

To perform this demo, you’ll need to grab a build of TsAnalyser and StreamGoo ‒ available here and here.

Once you have downloaded these tools, let’s assume you’ve got a machine ready to go that can see a working multicast RTP stream at rtp://239.1.1.1:1234.

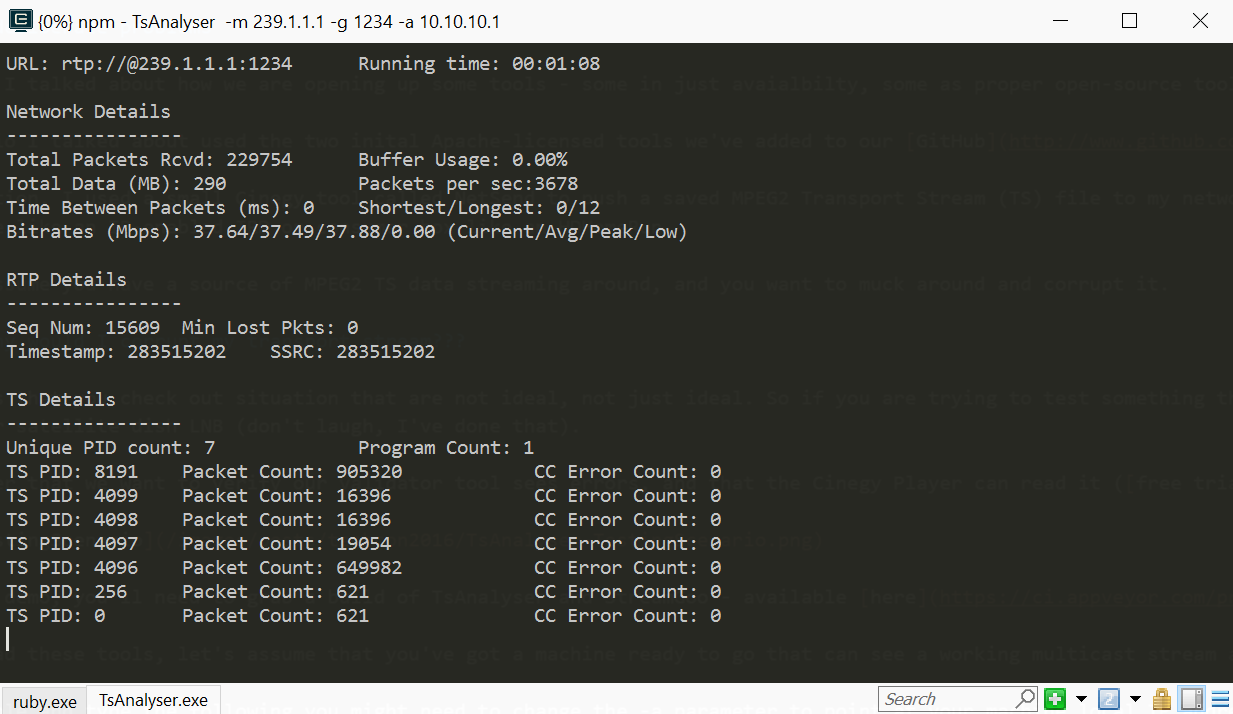

Pop open a console and type the following (you might need to change the -a parameter to point at your machine’s local IP address that receives the multicast):

TsAnalyser -m 239.1.1.1 -g 1234 -a 10.10.10.1

That done, you should see something like this:

So – that’s all working, and we have no errors! Yay! Let’s see if we can do something about that…

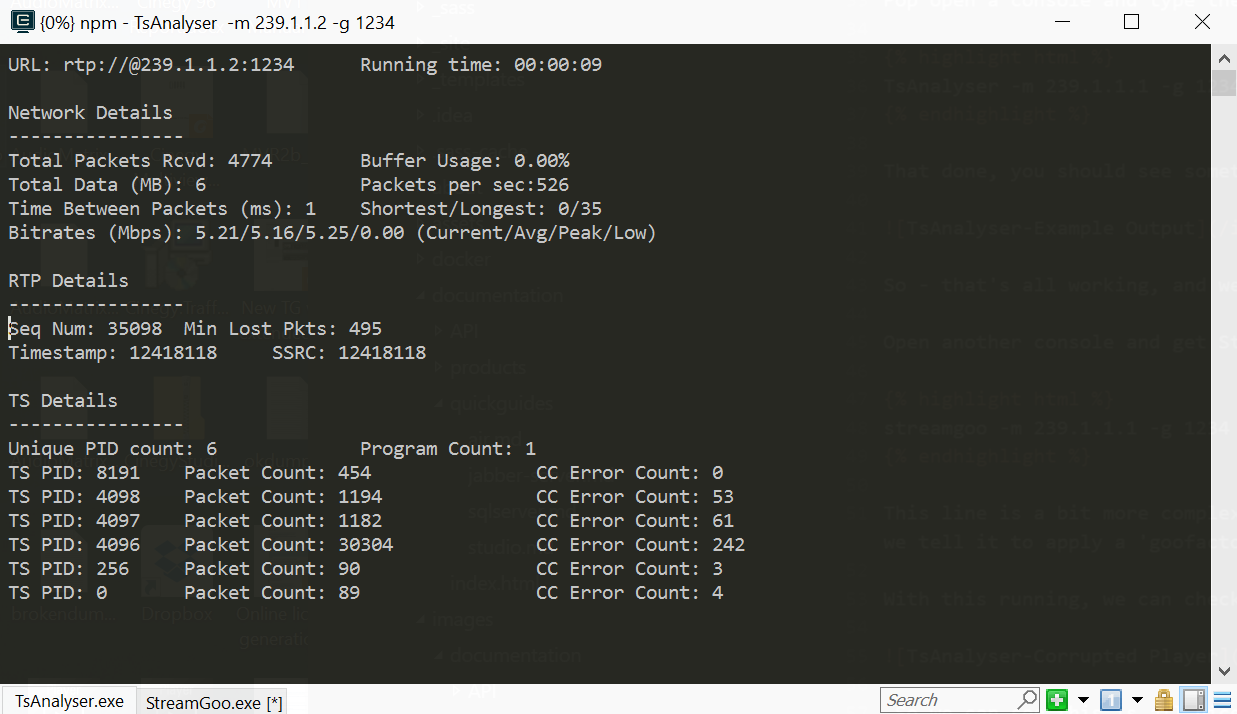
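As an aside, if you are wondering what the -m, -g and -a options used with TsAnalyser above boil down to at the socket level, here is a minimal Python sketch of joining a multicast group on a specific local adapter. This is not TsAnalyser's code; the function names `membership_request` and `open_multicast_receiver` are made up for illustration, and the addresses are just the ones from this demo.

```python
import socket


def membership_request(group: str, local_ip: str) -> bytes:
    """Build the 8-byte ip_mreq value: group address followed by local adapter address."""
    return socket.inet_aton(group) + socket.inet_aton(local_ip)


def open_multicast_receiver(group: str, port: int, local_ip: str) -> socket.socket:
    """Open a UDP socket that receives `group`:`port` via the adapter owning `local_ip`."""
    sock = socket.socket(socket.AF_INET, socket.SOCK_DGRAM, socket.IPPROTO_UDP)
    # Allow several listeners on the same machine to share the port.
    sock.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    sock.bind(("", port))
    # Ask the OS to join the group on the chosen adapter (the "-a" idea).
    sock.setsockopt(
        socket.IPPROTO_IP,
        socket.IP_ADD_MEMBERSHIP,
        membership_request(group, local_ip),
    )
    return sock


# Example use (needs a live stream and a matching local adapter):
#   sock = open_multicast_receiver("239.1.1.1", 1234, "10.10.10.1")
#   data, sender = sock.recvfrom(2048)
```

If the local address does not belong to an adapter on your machine, the join will fail, which is why you may need to change the -a value for your own network.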

Open another console and get StreamGoo running in the middle of the chain, and start it throwing some things away.

streamgoo -m 239.1.1.1 -g 1234 -n 239.1.1.2 -h 1234 -a 10.10.10.1 -b 10.10.10.1 -f 20 -t 4

This line looks a bit more complex, but it’s actually pretty simple. It listens just like TsAnalyser (-m and -g options), but it also has arguments indicating that the stream should be output to 239.1.1.2 (-n and -h options). It also has input and output adapters specified (-a and -b options). Finally, we tell it to apply a 'goofactor' (-f option) of 20, meaning roughly 20 in every 1000 packets will be corrupted, along with a 'gootype' (-t option) of 4, meaning drop packets.
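To get a feel for what "roughly 20 in every 1000" means, here is a small Python sketch of a packet dropper. It assumes each packet is dropped independently at random with probability goofactor/1000; how StreamGoo actually picks its victims is not covered in this post, so treat this as an illustration only. The name `drop_packets` is hypothetical.

```python
import random


def drop_packets(packets, goo_factor, rng=None):
    """Yield packets, randomly discarding about `goo_factor` out of every 1000.

    Assumption: each packet is dropped independently of the others.
    """
    if rng is None:
        rng = random.Random()
    for packet in packets:
        # randrange(1000) is uniform over 0..999, so this is true for
        # goo_factor values out of 1000 on average.
        if rng.randrange(1000) < goo_factor:
            continue  # packet silently thrown away
        yield packet


if __name__ == "__main__":
    total = 100_000
    survivors = sum(1 for _ in drop_packets(range(total), goo_factor=20))
    print(f"dropped {total - survivors} of {total} packets")
```

Because the drops are random, the exact count changes from run to run, but over a long stream it settles around 2% of packets.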

With this running, we can check what this does to the playing stream:

So, we can see this is all nicely screwed up ‒ but what does the analyzer show?

Excellent! We can see a load of lost packets! You might think this is a bit of a strange thing to have done, but as someone who has worked for some years troubleshooting and dealing with video over IP, there really is something to that phrase "know your enemy"!
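If you are curious how lost packets can be spotted at all, one standard clue is the 4-bit continuity counter in each 188-byte TS packet header, which counts up per PID for packets carrying payload. The Python sketch below counts jumps in that counter. It is a simplification, not a description of how TsAnalyser works: it ignores the discontinuity indicator and treats legal duplicate packets as errors, and since the stream here is RTP, an analyser could equally watch RTP sequence numbers. The names `count_continuity_errors` and `make_packet` are made up for this example.

```python
TS_PACKET_SIZE = 188
SYNC_BYTE = 0x47
NULL_PID = 0x1FFF


def count_continuity_errors(data: bytes) -> int:
    """Count continuity counter jumps per PID in a buffer of whole TS packets."""
    last_cc = {}
    errors = 0
    for offset in range(0, len(data) - TS_PACKET_SIZE + 1, TS_PACKET_SIZE):
        packet = data[offset:offset + TS_PACKET_SIZE]
        if packet[0] != SYNC_BYTE:
            continue  # not aligned on a TS packet, skip it
        pid = ((packet[1] & 0x1F) << 8) | packet[2]
        has_payload = bool(packet[3] & 0x10)
        cc = packet[3] & 0x0F
        # The counter only advances on packets with payload; null packets are ignored.
        if pid == NULL_PID or not has_payload:
            continue
        if pid in last_cc and cc != (last_cc[pid] + 1) % 16:
            errors += 1
        last_cc[pid] = cc
    return errors


def make_packet(pid: int, cc: int) -> bytes:
    """Build a dummy payload-only TS packet for testing."""
    header = bytes([SYNC_BYTE, (pid >> 8) & 0x1F, pid & 0xFF, 0x10 | (cc & 0x0F)])
    return header + bytes(TS_PACKET_SIZE - 4)


if __name__ == "__main__":
    packets = [make_packet(0x100, i % 16) for i in range(20)]
    del packets[5]  # simulate one dropped packet
    print(count_continuity_errors(b"".join(packets)))
```

Note that a counter jump tells you something went missing on that PID, not how many packets were lost, since the counter wraps every 16 packets.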